Bionoculars, a biomedical literature search engine

Evidence-based rigor with modern AI speed.

Tuned for biomedical research. Built and hosted in the EU.

How Bionoculars compares

| Capabilities | Traditional Search | AI Chatbots | Bionoculars |

|---|---|---|---|

| Examples | PubMed, Google Scholar | Elicit, Consensus, ChatGPT | – |

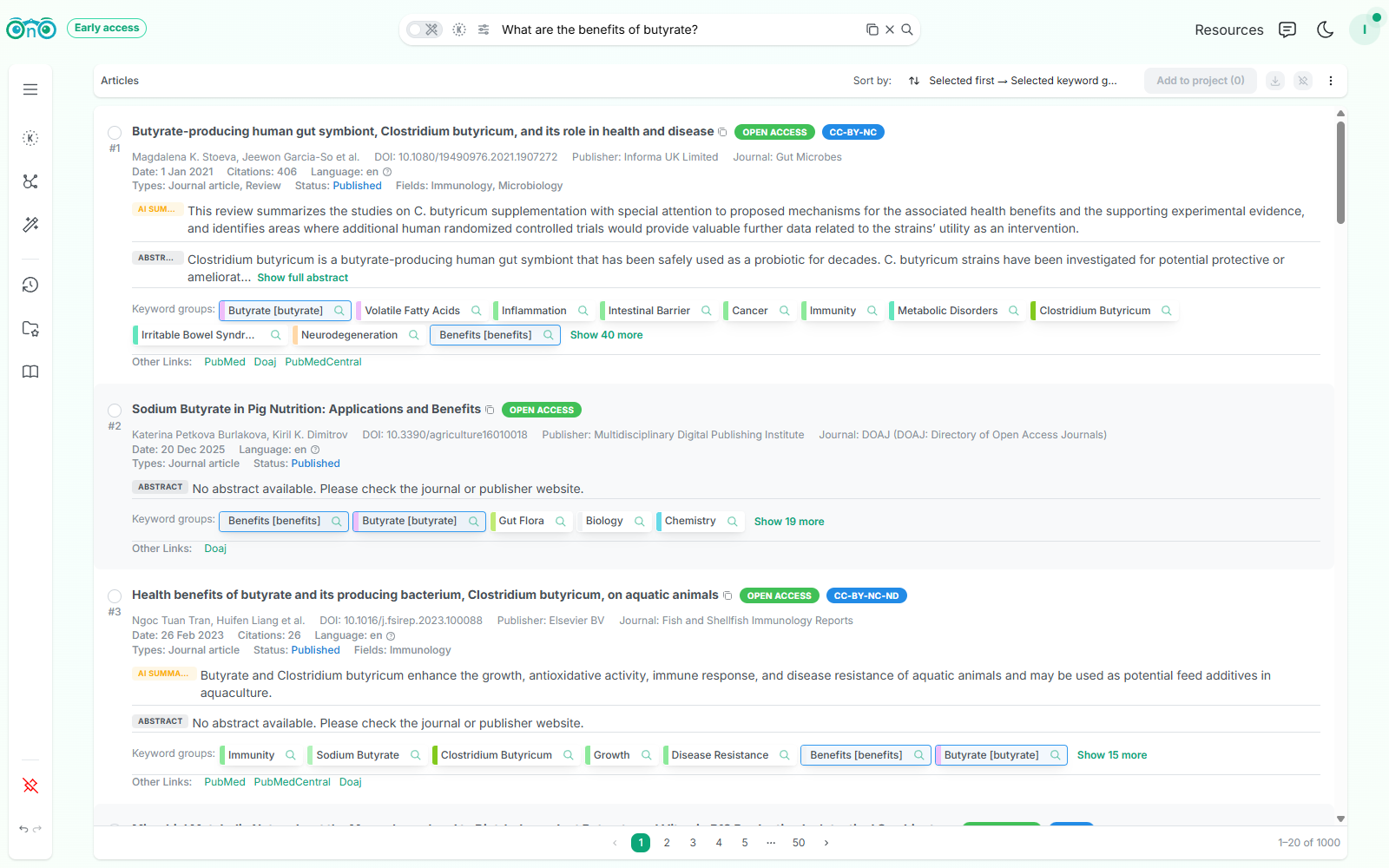

| Scope & Ranking | Exhaustive, but relies on rigid boolean formulas | Highly selective, operates behind a black box | 117M publications with visible, editable ranking signals |

| Search Intent | Demands the perfect query adapted to its search engine | Tries to guess intent from multiple messages | Develop intent iteratively via semantic keyword groups |

| Synthesis & Trust | Enormous manual effort to extract insights | Prone to hidden bias; can't verify what it considered and what it missed | AI synthesizes your article selection. Every claim is cited |

| Handling Synonyms | Requires complex manual OR/AND strings | Inconsistent; often misses niche variants | Systematic mapping using UMLS Metathesaurus and advanced semantic representation |

Your search, your way

A fast path from question to insight, with power tools when you need them.

See why each result ranks where it does

Ranking signals and index keywords are not hidden behind opaque algorithms. You can verify, adjust, and trust what you read.

See it in action

A researcher searched for "calcium score in nuclear medicine". After a quick keyword refinement in Bionoculars, 7 of the top 10 results matched their intent, compared with 3 with traditional search.

Frequently asked questions

PubMed and Google Scholar are powerful but leave synthesis entirely to you. AI tools are fast but opaque, carry their own biases, and show you only a limited set of articles. Bionoculars sits in the middle: AI helps you explore, prioritize, and summarize, while every ranking signal and insight stays transparent and editable.